Registration for the challenge is now closed. Registered participants will be sent a link to the test data and related instructions, as per the schedule below.

Detection of the region of interest (ROI) in images is one of the fundamental tasks in various domains (e.g. faces for biometric applications, the human body for activity analysis, specific objects for human-computer interaction).

ROI detection is equally important in domains involving natural environments. There, several factors complicate the task: the object often occupies a small region relative to the background, the background varies across environments, and objects vary in shape, size, colour and texture.

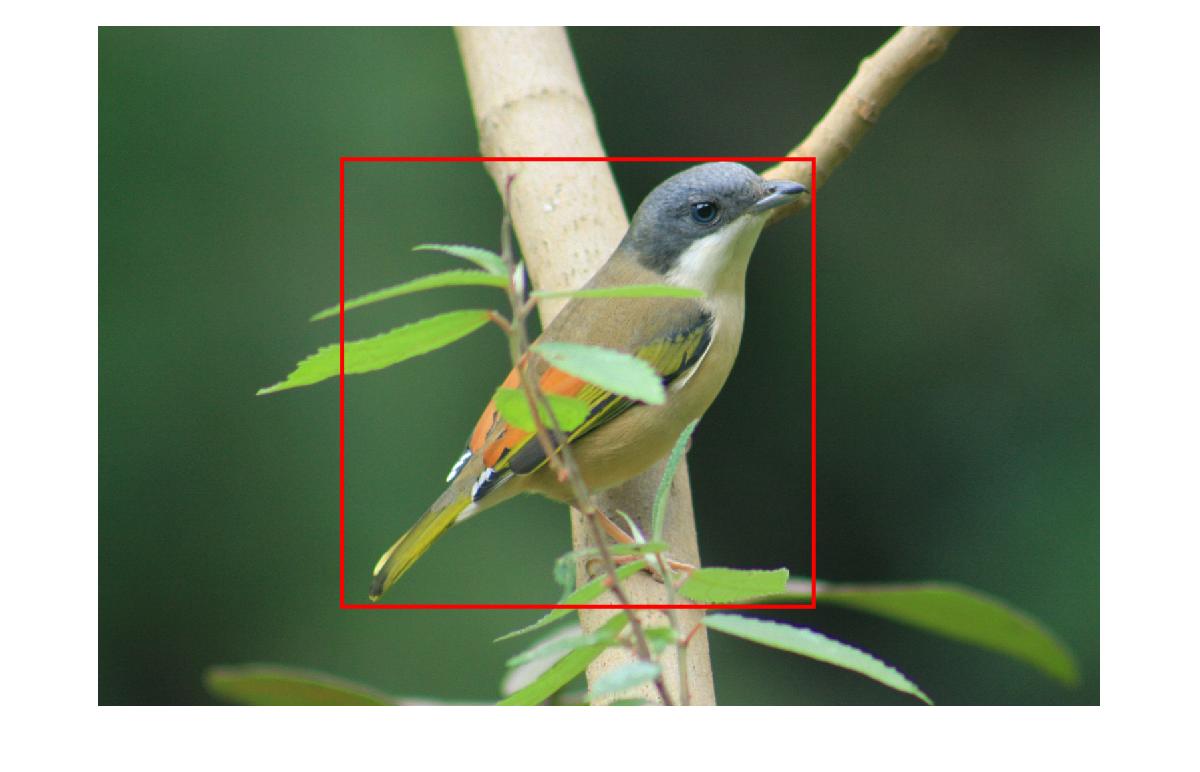

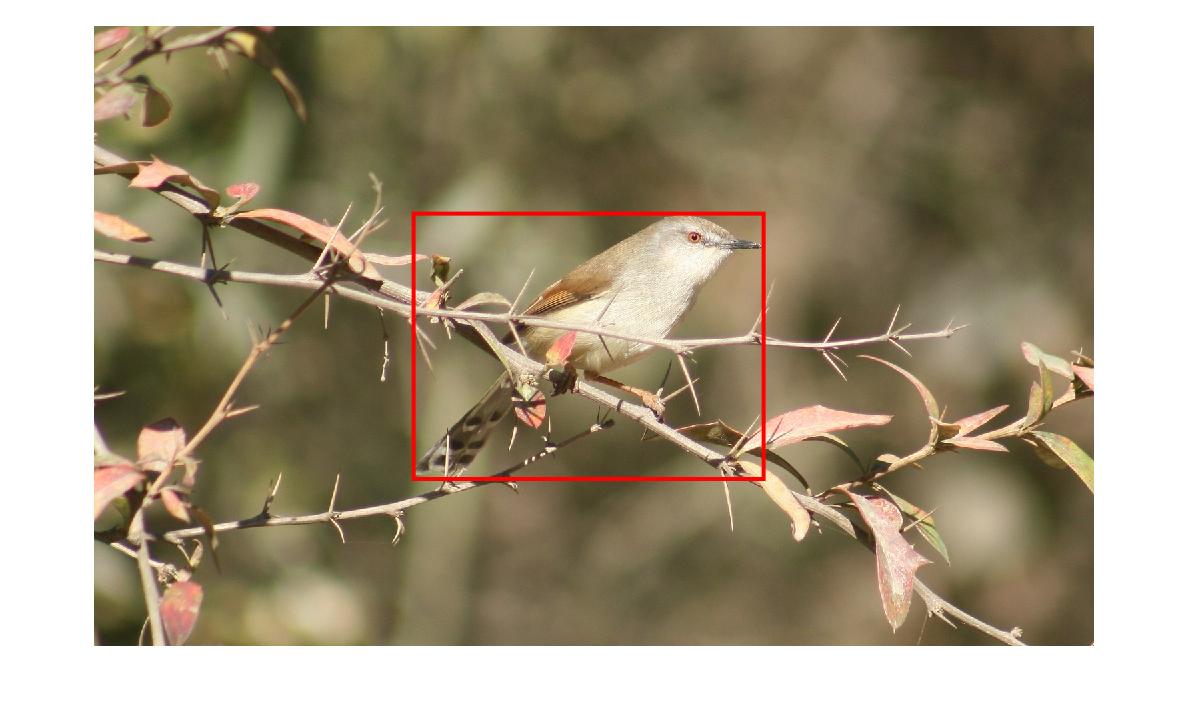

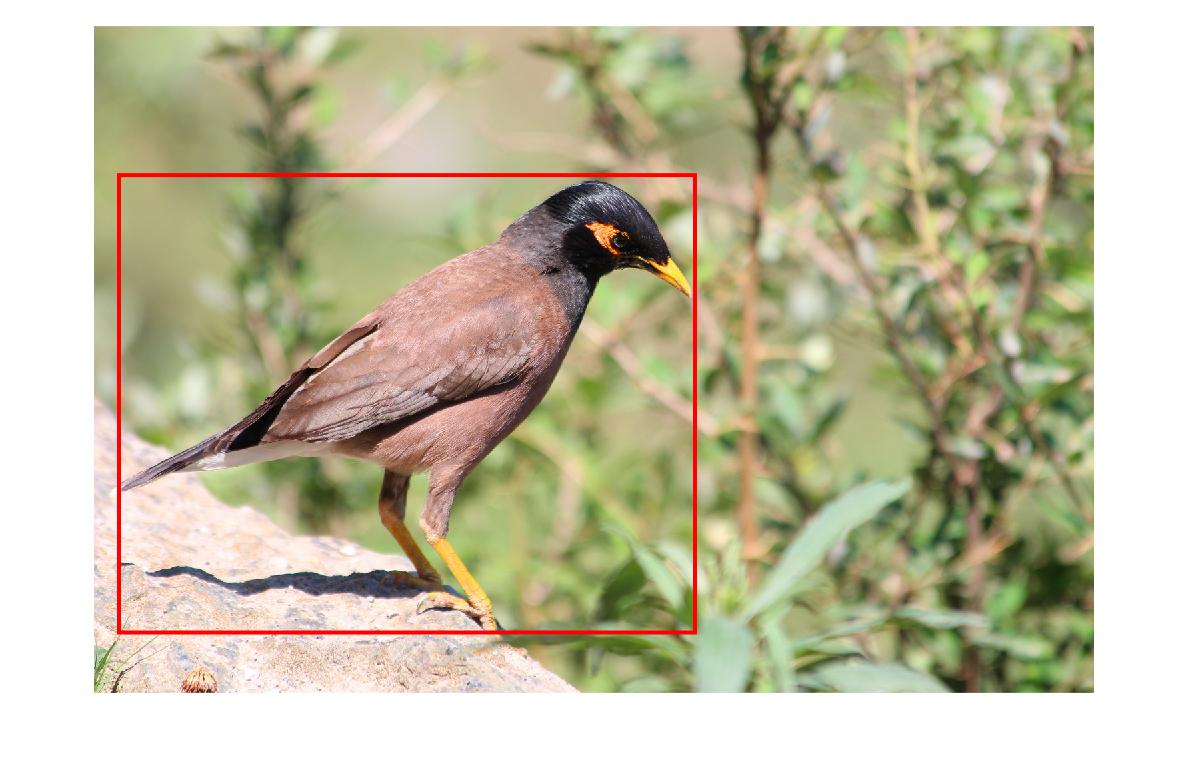

In this challenge we consider ROI detection for bird images captured in uncontrolled environments. As in other detection problems, the task is to develop algorithms that compute a precise bounding box around the region containing the bird. See the figures above for some examples. The details of the training and testing process are given below:

Training and validation:

A set of 325 images is provided for training, along with a text file containing the coordinates of the manually labeled bounding boxes. To develop and validate their algorithms, participants should use only the images in the training set. The ratio of images used for training to images used for validation can be decided by the participants.
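Since the split is left to the participants, one common option (not prescribed by the organizers) is k-fold cross-validation, which also yields the cross-validation trials that the extended abstract asks participants to report. The sketch below assumes only that the training image IDs are available as a list; the function name `k_fold_splits`, the choice k = 5, and the fixed seed are illustrative assumptions.

```python
import random
from typing import Iterator, List, Sequence, Tuple


def k_fold_splits(
    image_ids: Sequence[str], k: int = 5, seed: int = 0
) -> Iterator[Tuple[List[str], List[str]]]:
    """Yield (train_ids, val_ids) for each of k cross-validation trials.

    Every image appears in exactly one validation fold; the shuffle is
    seeded so the split can be reproduced and reported.
    """
    if not 2 <= k <= len(image_ids):
        raise ValueError("k must be between 2 and the number of images")
    ids = list(image_ids)
    random.Random(seed).shuffle(ids)
    # Round-robin assignment gives folds whose sizes differ by at most one.
    folds = [ids[i::k] for i in range(k)]
    for i in range(k):
        val_ids = folds[i]
        train_ids = [x for j, fold in enumerate(folds) if j != i for x in fold]
        yield train_ids, val_ids


if __name__ == "__main__":
    # Hypothetical file names standing in for the 325 training images.
    names = [f"img_{n:03d}.jpg" for n in range(325)]
    for trial, (train, val) in enumerate(k_fold_splits(names, k=5)):
        print(trial, len(train), len(val))
```

With 325 images and k = 5, each trial trains on 260 images and validates on 65, and every image is used for validation exactly once.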

The ROI detection task is divided into two categories, and entries will be evaluated accordingly.

a) Supervised: The participants can use some or all of the training images and the provided bounding box coordinates for training and validation, in order to develop their algorithms.

b) Unsupervised: The participants can use only the training images (but not the bounding box coordinates) for developing their algorithms.

Testing:

The testing images will be made available as per the schedule below, and a notification will be sent to all registered participants. The testing set will consist of images only; the bounding box information will be withheld by the organizers.

After obtaining the ROI results on the test images, the authors need to submit their results in the same format as the training label file, which contains the file names and the corresponding bounding box coordinates. More details on the formatting of the results will be shared along with the testing data.

Note: The authors should not make any changes to the algorithms or their parameters while processing the test images. Any changes to the algorithms and their parameters must be made using only the training (and validation) data.

Extended abstract:

Along with the test results, the authors should also submit an extended abstract stating whether the work is in the supervised or the unsupervised category, and describing their approach, experimental details, some example visual results, and quantitative results averaged over the whole validation set across cross-validation trials. Among other details, the experimental details should include the number of images used for training (in the supervised category) and for validation, and information about the cross-validation procedure. The quantitative validation results should be reported in terms of precision, recall and F-score, obtained by comparing the estimated bounding box with the ground-truth bounding box. More details about the formatting of the abstract will be made available soon.
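The call does not define exactly how precision, recall and F-score are computed from two boxes. One common area-based convention, assumed here and possibly different from the organizers' own definition, is: precision = overlap area / predicted-box area, recall = overlap area / ground-truth-box area, and F-score = their harmonic mean. The sketch also assumes that the (row, column) pair marks the top-left corner of the box; `Box`, `box_prf` and `mean_prf` are hypothetical names.

```python
from typing import Iterable, NamedTuple, Tuple


class Box(NamedTuple):
    row: int     # assumed: row of the top-left corner
    col: int     # assumed: column of the top-left corner
    width: int   # extent along columns, in pixels
    height: int  # extent along rows, in pixels

    @property
    def area(self) -> int:
        return max(0, self.width) * max(0, self.height)


def intersection_area(a: Box, b: Box) -> int:
    """Area (in pixels) of the overlap between two axis-aligned boxes."""
    top = max(a.row, b.row)
    left = max(a.col, b.col)
    bottom = min(a.row + a.height, b.row + b.height)
    right = min(a.col + a.width, b.col + b.width)
    return max(0, bottom - top) * max(0, right - left)


def box_prf(pred: Box, gt: Box) -> Tuple[float, float, float]:
    """Area-based precision, recall and F-score of one predicted box."""
    inter = intersection_area(pred, gt)
    precision = inter / pred.area if pred.area else 0.0
    recall = inter / gt.area if gt.area else 0.0
    denom = precision + recall
    f_score = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f_score


def mean_prf(pairs: Iterable[Tuple[Box, Box]]) -> Tuple[float, float, float]:
    """Average per-image scores over (pred, gt) pairs, e.g. a validation fold."""
    scores = [box_prf(p, g) for p, g in pairs]
    if not scores:
        raise ValueError("no boxes to evaluate")
    n = len(scores)
    return tuple(sum(s[i] for s in scores) / n for i in range(3))
```

For example, a 100 x 100 prediction at (10, 10) against a 100 x 100 ground truth at (20, 20) overlaps in a 90 x 90 region, so precision = recall = 8100 / 10000 = 0.81 and the F-score is also 0.81. Averaging `mean_prf` over each validation fold, and then over the folds, gives one way to report results "averaged across cross-validation trials".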

Submitting the test results and extended abstracts:

The following must be submitted as a zip file via an email to ncvpripg2017@gmail.com:

1) A text file with the test results in the same format as that provided for training labels.

Each line of the text file should give the following details of the bounding box detected in a test image:

"image_id" "row_location_of_bounding_box" "column_location_of_bounding_box" "width" "height"

2) A code package for the detection algorithm.

We require this at least to browse through the code and ascertain its authenticity.

We might also re-run the code for verification.

3) A 4-page extended abstract in the Springer LNCS format, briefly discussing the problem, the proposed algorithm and the results.

The Springer LNCS format can be obtained from the submission page of the conference (http://ncvpripg.iitmandi.ac.in/submissions.html).

4) Details of the presentation format for the challenge session will be announced shortly.
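The results file in item 1 above is plain text with one line per test image. A minimal sketch for writing and re-reading it follows, assuming whitespace-separated fields in the listed order (image ID, row, column, width, height), integer pixel coordinates, and (row, column) marking the top-left corner. The helper names are hypothetical, and the authoritative format remains the training label file and the instructions shipped with the test data.

```python
from pathlib import Path
from typing import Dict, Tuple, Union

# (row, column, width, height); row/column assumed to be the top-left corner.
BoxTuple = Tuple[int, int, int, int]


def format_result_line(image_id: str, box: BoxTuple) -> str:
    """One whitespace-separated line: image_id row column width height."""
    row, col, width, height = box
    return f"{image_id} {row} {col} {width} {height}"


def parse_result_line(line: str) -> Tuple[str, BoxTuple]:
    """Inverse of format_result_line; raises ValueError on malformed input."""
    fields = line.split()
    if len(fields) != 5:
        raise ValueError(f"expected 5 fields, got {len(fields)}: {line!r}")
    image_id, *numbers = fields
    row, col, width, height = (int(v) for v in numbers)
    return image_id, (row, col, width, height)


def write_results(path: Union[str, Path], results: Dict[str, BoxTuple]) -> None:
    """Write one line per test image, sorted by image ID for easy checking."""
    with open(path, "w", encoding="utf-8") as fh:
        for image_id in sorted(results):
            fh.write(format_result_line(image_id, results[image_id]) + "\n")


def read_results(path: Union[str, Path]) -> Dict[str, BoxTuple]:
    """Read a results file back into a dict, skipping blank lines."""
    out: Dict[str, BoxTuple] = {}
    with open(path, encoding="utf-8") as fh:
        for line in fh:
            if line.strip():
                image_id, box = parse_result_line(line)
                out[image_id] = box
    return out
```

Re-reading the written file with `read_results` before zipping it is a cheap check that every line has exactly five fields; if the training label file turns out to use the same layout, the same reader could load the ground truth for validation.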

Registration:

Challenge participants can register at the regular conference rates, according to their category: http://ncvpripg.iitmandi.ac.in/registration.html

Important Dates:

Challenge registration open: Sep 27, 2017

Training data release: Sep 28, 2017

Registration for participation: Oct 20, 2017

Testing data release: Nov 1, 2017

Final submission of results and extended abstract: Dec 10, 2017

Registration: Dec 5, 2017